Anesthesiology News

The 2019 annual meeting of the Society for Technology in Anesthesia (STA) took place this past January at the Four Seasons Resort in Scottsdale, Ariz. Clinicians and industry members came together for four days to learn about the latest technology innovations in our specialty. The theme of the meeting was “Mind and Machine,” with each session highlighting ways in which our specialty is being driven forward by the deep and growing role of machine learning, computer-based simulation and integrated electronic health records (EHRs).

The meeting opened with a keynote by Ben Ransford, PhD, of Virta Labs, who talked about “Hidden Cybersecurity Risks of Hyperconnected Healthcare.” Dr. Ransford gave a broad overview of the security threats facing the modern health care system, from replay and side-channel attacks on pacemakers to the threat of ransomware and hardware/software obsolescence for health care organizations.

The first point that Dr. Ransford highlighted was the difference between software and hardware, and how medical devices are rapidly changing to become more software driven. This allows for rapid development and easier changes to functionality, but at the cost of increased complexity. With an average of 14 medical devices per bed, 90% of which are or will be connected to a network, the security challenges are formidable. Hospitals face a multitude of vulnerabilities, from devices running obsolete operating systems that have unpatched vulnerabilities and no plan by manufacturers to apply upgrades, to ransomware attacks that take advantage of social engineering techniques to spread crippling computer viruses within the organization.

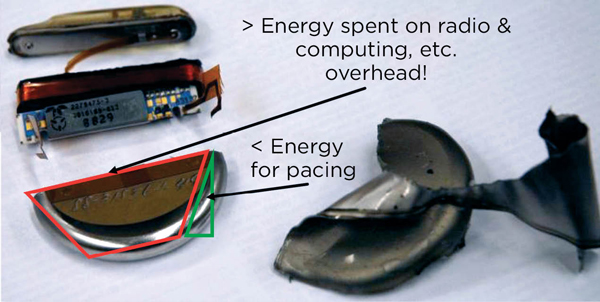

Dr. Ransford gave several examples of recent attacks on both devices and hospital networks that took advantage of lax security policies and protocols. For example, his lab analyzed an implantable cardioverter defibrillator (ICD) and found multiple avenues for attack. Modern pacemakers and ICDs are essentially small wireless computers, and most of the energy they use is devoted to radio and computation (Figure 1). Data are transmitted between the ICD and programmer in plain text or weakly encoded text. The ICD itself emits a radio signal that can be captured and reconstructed into recognizable vital signs. The control codes used by the programmer are easily decoded, and programming commands are easily spoofed using a software-defined radio. This allows for attacks such as programming the radio in the ICD to continuously transmit, thereby draining the battery and rendering the device nonfunctional. Perhaps most concerning was that much of this work was done over a decade ago, yet fundamental security flaws remain in these devices.1,2 Dr. Ransford ended his talk with a list of how clinicians can help improve security:

- Be informed.

- Push for organizational clarity. Be an advocate for strong and clearly defined policies and procedures for device and network security.

- Don’t make it worse. Follow best security practices.

- Make some noise. The adage “if you see something, say something” applies as much to health care as it does to public transportation.
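The spoofing and replay weaknesses described in the ICD example above can be illustrated with a small Python sketch. It does not reproduce any real device protocol. The key, the command name `RADIO_ON`, and both device classes are hypothetical, and the defense shown (a message authentication code plus a counter) is one common textbook approach rather than the method used by Dr. Ransford's lab or by any manufacturer.

```python
import hashlib
import hmac

SECRET_KEY = b"demo-shared-key"  # hypothetical key shared by programmer and device


def sign(counter: int, command: bytes) -> bytes:
    """Return an HMAC-SHA256 tag over the counter and the command."""
    message = counter.to_bytes(8, "big") + command
    return hmac.new(SECRET_KEY, message, hashlib.sha256).digest()


class NaiveDevice:
    """Accepts any command it receives, as a plain-text protocol would."""

    def handle(self, command: bytes) -> bool:
        return True


class AuthenticatedDevice:
    """Accepts a command only with a valid tag and a fresh counter."""

    def __init__(self) -> None:
        self.last_counter = 0

    def handle(self, counter: int, command: bytes, tag: bytes) -> bool:
        if counter <= self.last_counter:
            return False  # stale counter: a replayed message
        if not hmac.compare_digest(tag, sign(counter, command)):
            return False  # bad tag: a forged message
        self.last_counter = counter
        return True


# A captured message is accepted again by the naive device.
captured = b"RADIO_ON"
naive = NaiveDevice()
naive_replay_accepted = naive.handle(captured) and naive.handle(captured)

# The authenticated device accepts it once, then rejects replay and forgery.
device = AuthenticatedDevice()
tag = sign(1, captured)
first_accepted = device.handle(1, captured, tag)
replay_accepted = device.handle(1, captured, tag)
forged_accepted = device.handle(2, captured, b"\x00" * 32)

print(naive_replay_accepted, first_accepted, replay_accepted, forged_accepted)
```

The sketch ignores the power and memory limits of an implanted device, which are a large part of why securing these devices is hard in practice.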

New Developments in Simulation and Learning

Recent advances in screen-based, augmented and virtual reality simulation are causing a shift from high-fidelity, mannequin-based simulation to low- to medium-fidelity, screen-based simulation. Anjan Shah, MD, from the Icahn School of Medicine at Mount Sinai, in New York City, discussed how this change is being driven in part by the cost, space and physical presence requirements of high-fidelity simulation. In contrast, screen-based simulations can easily be made available online, so participants do not need to be physically present. Furthermore, screen-based simulations cost less, can be easily customized to the individual participant/learner, and provide instant feedback.

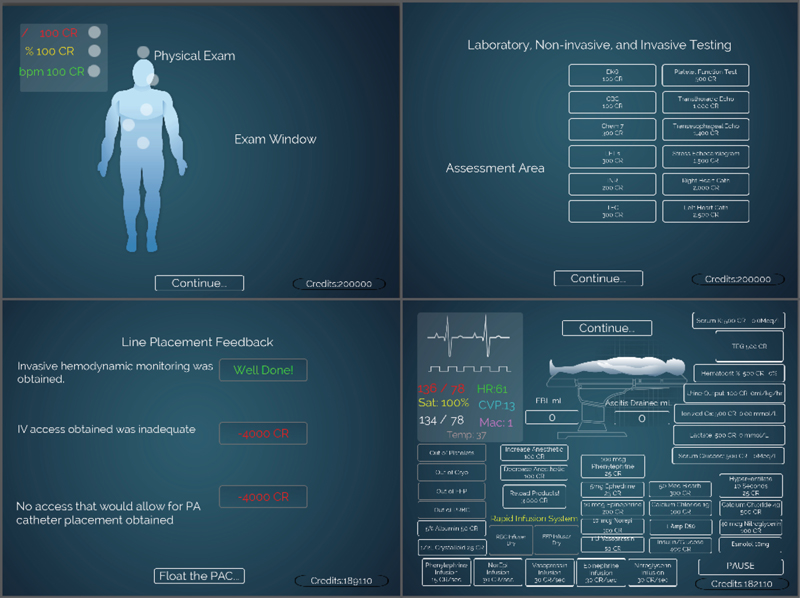

An important development in simulation is the use of virtual reality (VR) and gaming, driven by the recognition that millennial learners have a different, more visual and flow-based learning style. “Serious games” are interactive applications that leverage the self-reinforcement of video games with the purpose of knowledge acquisition. An interactive station allowed STA attendees to try an orthotopic liver transplant game in which users practice taking a patient through a liver transplant (Figure 2).3

Cole Sandau, the CEO of Health Scholars, talked about the advantages of moving simulation for rare or unusual experiences into VR. By taking an analog training and education process such as advanced cardiac life support (ACLS) and moving it into software, educators gain the ability to easily scale up training. For example, a large health care organization might need to maintain ACLS certifications for 10,000 providers. Virtual reality allows for the creation of fully immersive experiences that enable participants to practice, get certified and—more importantly—fail as many times as necessary, without incurring significant additional cost.

Although VR is exciting and attractive, it has some important limitations. The level of realism has to be sufficiently high for participants to suspend disbelief. The cognitive load of using it has to be minimal. Finally, current VR setups work best for workflow and decision-making skills and are less effective for fine motor skills and dexterity.

Emerging Device Technologies: Invention Versus Innovation

One of the most exciting developments of the past several years is the emergence of the so-called Internet of Things (IoT)—specifically, the addition of networking capabilities to small everyday devices. This has enabled the emergence of the “smart home” and soon, “Ward 3.0”—continuous wireless monitoring of postoperative patients on the floor.4 Another area in which IoT devices may prove valuable is in redesigning adult monitors for use in pediatric anesthesia, or more generally for home monitoring of children.

Peter Szmuk, MD, of UT Southwestern Medical Center, in Dallas, discussed the challenges involved in adapting adult devices. As with adult devices, FDA approval is challenging, although the FDA is “committed to supporting the development and availability of safe and effective pediatric medical devices.”5 Dr. Szmuk presented the example of a new device that calculates an Oxygen Reserve Index by comparing SvO2 with SaO2 (i.e., mixed venous oxygen saturation with oxygen saturation).6 This device is still not approved in the United States despite more than two years of review by the FDA and a successful pilot study.

Companies have skirted the issue by avoiding making claims of medical efficacy, particularly for the consumer market. For example, there are now two commercially available smartphone-connected baby monitors that use pulse oximetry. The requirement for FDA approval was avoided by not claiming the devices can prevent sudden infant death syndrome. Researchers from Children’s Hospital of Philadelphia demonstrated that these devices perform poorly compared with a medical-grade pulse oximeter.7,8

Challenges of Next-Generation Anesthesia Information Management Systems

A large amount of discussion at this year’s meeting centered on the challenges being faced by all anesthesiologists with the transition to next-generation intraoperative anesthesia information management systems. Although these new anesthesia EHRs offer the advantage of integration with the main hospital EHR, many suffer from a one-size-fits-all rather than a best-of-breed approach. In other words, the charting needs for the perioperative environment are often very different from those of a nurse or a physician on the floor, yet the perioperative provider is asked to use the same tools and interface. This discrepancy can have harmful effects on efficiency.

A 2017 study by McDowell et al showed that implementation of a new EHR resulted in a significant increase in operating room turnover time from 53 to 63 minutes during the first month after implementation.9 This difference persisted for at least five months after implementation.

Nevertheless, these intraoperative EHRs are under active development and do offer some compelling tools and uses made possible by their tight integration with the main hospital EHR. Patrick Guffey, MD, of Children’s Hospital Colorado in Aurora, opened the session by talking about situational awareness pathways and how next-generation EHRs allow easy visualization of data with built-in customizable reports and dashboards. Physicians want to do the right thing, but they need data to know where they are with respect to the gold standard. Furthermore, peer pressure can be highly motivational. Seeing how other clinicians are performing and where you stand in relation to them can be a very effective factor for influencing change. Dr. Guffey presented the results of the implementation of a spine protocol using in-EHR alerts and reminders. The median hospital length of stay was reduced, with median discharge on postoperative day three.10 In addition, there were no surgical site infections for three years.

A key way in which anesthesiologists can take control of their fate in the new world of integrated EHRs is to become a “physician builder.” Jonathan Wanderer, MD, of Vanderbilt University Medical Center in Nashville, Tenn., discussed the value of being a physician builder, his experience and how to obtain such status. Physicians need to complete over 20 hours of training at the headquarters of Epic Systems (Verona, Wis.) before being granted certification as a physician builder.11 Upon certification, physicians are able to independently make modifications to their Epic installation. This can be tremendously empowering to departments and groups, as it restores some of the autonomy so necessary for responding to constantly changing practice and medicolegal requirements. Working in a single unified system, however, is both a pro and a con, as your build can affect other users. Ultimately, Vanderbilt was able to rebuild most of the functionality of their former in-house, best-of-breed system within Epic or adapt their old systems to work with data from Epic. This is encouraging news for practices that rely heavily on custom solutions for workflow efficiency.

Patrick J. McCormick, MD, of Memorial Sloan Kettering Cancer Center, in New York City, closed out the session with a presentation titled “Anesthesiologist-Augmented Intelligence.” Beginning with a brief overview of what augmented intelligence means in other fields and in the context of computing in general, Dr. McCormick narrowed his focus to recent examples of how software has been used to give anesthesiologists enhanced information at their fingertips as well as decision support.

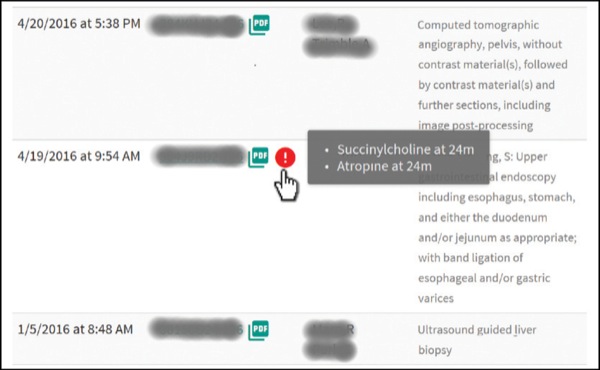

He presented the findings of Wax et al, who showed how implementation of an automated critical event screening and notification system significantly increased the preoperative review of previous anesthetic records by more than 50% (59% vs. 39% of records reviewed; Figure 3).12 In contrast, a randomized controlled trial that attempted to decrease perioperative mortality by alerting providers to episodes of combined low blood pressure and low bispectral index (a so-called “double low”) failed to show a significant effect.13

These results highlight how decision support is often good at improving compliance with process measures, but not necessarily as good at improving outcomes—particularly since the contemporary incidence of perioperative morbidity and mortality attributable to anesthesia is, overall, quite low. The double low study did show, however, that patients who had more than 60 minutes of cumulative double low time were twice as likely to die as others (hazard ratio, 1.99; P=0.005). This suggests that there is value in monitoring these higher-level trends. A more recent similar study of a graphical monitoring system (AlertWatch) again showed that while process measures improved, postoperative clinical outcomes did not differ significantly between the control and intervention groups.14
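The “more than 50%” figure for record review is a relative increase, which is easy to confuse with the absolute change in percentage points. A short Python sketch of the arithmetic, using only the two proportions reported above (59% and 39%); the function names are illustrative:

```python
def absolute_increase(before: float, after: float) -> float:
    """Difference between two proportions."""
    return after - before


def relative_increase(before: float, after: float) -> float:
    """Difference expressed as a fraction of the starting proportion."""
    return (after - before) / before


before, after = 0.39, 0.59  # proportion of records reviewed, before and after
abs_points = absolute_increase(before, after) * 100
rel_percent = relative_increase(before, after) * 100

print(f"absolute increase: {abs_points:.0f} percentage points")
print(f"relative increase: {rel_percent:.1f}%")
```

The absolute change is 20 percentage points, while the relative change is about 51%, consistent with the “more than 50%” description.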

Finally, Dr. McCormick ended his talk with a note of warning best summarized by the old adage, “If all you have is a hammer, everything looks like a nail.” If every attempt to augment provider intelligence is implemented by adding icons and buttons to the EHR, you end up with icon overload and forget what all of them mean.

Machine Learning: The Future of Perioperative Analytics?

Perhaps the most exciting technology trend in anesthesiology in the past few years has been the arrival of machine-learning techniques as a new paradigm for data-driven perioperative research. Maxime Cannesson, MD, PhD, from the UCLA Health System, talked about the “tyranny of metrics” imposed by regulatory and compliance agencies, which is being reinforced by large, monolithic EHRs.15 Organizations have become so focused on quantifying and measuring performance that they have lost sight of what is being measured. Yet discrete EHR data are only a small portion of a person’s health data. There are many other types of data, such as waveforms, imaging, genomics and environmental data. These complicate the relatively simple EHR data, rapidly exceeding the computing ability of traditional analysis.

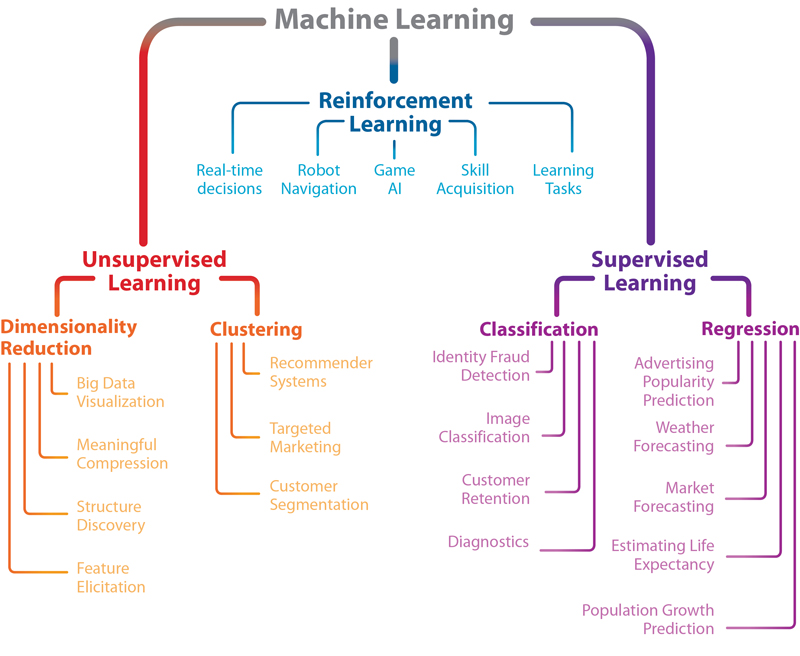

Successfully using all of this big data to not just report but learn and predict requires new techniques. In this context, Christine Lee, the senior engineer at Edwards Lifesciences, gave an overview of machine learning (Figure 4). She walked the audience through the typical steps required for a successful analysis, from data preparation and cleaning to feature selection, training and finally testing model performance. In a sense, this is no different from any traditional statistical analysis. What does differ is the type of algorithms employed and the scale. Deep neural networks and convolutional neural networks use pattern recognition to learn what inputs are associated with a specified output. The power of these networks is that complex intra-variable relationships can be implicitly modeled, and feature reduction (the process of choosing the most important prediction variables) can be automated. An example of an intra-variable relationship that is not easy to model using traditional techniques would be the effect of prior blood pressure trends on current blood pressure.

For example, Lee et al demonstrated that a deep neural net trained on intraoperative data (drugs, fluids, medications) can predict postoperative in-hospital mortality with excellent performance, comparable to or better than a carefully tuned logistic regression model, but better able to implicitly account for nonlinear associations between intraoperative variables.16 Similarly, Hatib et al used machine-learning techniques to optimize an algorithm designed to predict hypotension using over 3,000 features extracted from arterial waveform data.17 This is an excellent example of the value of machine learning, as it would simply not be possible for a clinician or statistician to conceptualize, derive and evaluate such a large number of features.
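The analysis steps outlined in the overview (data preparation and cleaning, feature selection, training, and testing) can be sketched in a few dozen lines of Python. This is a minimal illustration on synthetic data, not the approach used in any of the studies cited. It fits a plain logistic regression rather than a deep neural network, and the data, the noise feature, and the covariance-based selection rule are all assumptions made for the example.

```python
import math
import random

random.seed(0)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# 1. Data preparation: synthetic rows of (features, outcome). Feature 2 is
#    pure noise, and a few rows carry a missing value (None).
def make_row():
    x = [random.gauss(0, 1) for _ in range(3)]
    y = 1 if random.random() < sigmoid(2.0 * x[0] - 1.5 * x[1]) else 0
    if random.random() < 0.05:
        x[random.randrange(3)] = None
    return x, y


rows = [make_row() for _ in range(500)]

# 2. Cleaning: drop rows with missing values.
rows = [(x, y) for x, y in rows if None not in x]

# 3. Split before any fitting so the test rows stay unseen.
random.shuffle(rows)
cut = int(0.8 * len(rows))
train, test = rows[:cut], rows[cut:]


# 4. Feature selection: keep the two features whose training-set covariance
#    with the outcome is largest in absolute value.
def abs_covariance(data, j):
    mean_x = sum(x[j] for x, _ in data) / len(data)
    mean_y = sum(y for _, y in data) / len(data)
    return abs(sum((x[j] - mean_x) * (y - mean_y) for x, y in data) / len(data))


selected = sorted(range(3), key=lambda j: abs_covariance(train, j), reverse=True)[:2]


# 5. Training: logistic regression fitted by batch gradient descent.
def fit(data, features, epochs=200, lr=0.5):
    weights = [0.0] * len(features)
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(features)
        grad_b = 0.0
        for x, y in data:
            z = bias + sum(w * x[j] for w, j in zip(weights, features))
            err = sigmoid(z) - y
            for k, j in enumerate(features):
                grad_w[k] += err * x[j]
            grad_b += err
        weights = [w - lr * g / len(data) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(data)
    return weights, bias


weights, bias = fit(train, selected)


# 6. Testing: accuracy on the held-out rows.
def predict(x):
    z = bias + sum(w * x[j] for w, j in zip(weights, selected))
    return 1 if sigmoid(z) >= 0.5 else 0


accuracy = sum(predict(x) == y for x, y in test) / len(test)
print(f"selected features: {sorted(selected)}, test accuracy: {accuracy:.2f}")
```

In a deep network, step 4 would largely be absorbed into training, which is the automation of feature reduction described above.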

Dr. Cannesson also explained how classic risk prediction models do not always make ideal risk scores. For example, Sessler et al’s Risk Stratification Index (RSI) has excellent performance (c-statistic, or concordance, of 0.98 for in-hospital mortality) but relies entirely on post-discharge administrative data (i.e., International Classification of Diseases, 9th or 10th Revision codes).18 This renders the RSI unsuitable for use during or prior to a hospital admission. The ideal risk score would be accurate like the RSI, but also easy to implement (which does not equate to simple) at the point of care so that it is specific and patient-centered. Dr. Cannesson concluded his talk by reminding the audience that using fancy techniques on simple data does not have much benefit over using simple techniques.
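For readers less familiar with the c-statistic quoted for the RSI, it can be read as the fraction of (event, non-event) patient pairs in which the patient who had the event was assigned the higher predicted risk. A minimal Python sketch of that definition follows; the six risk scores and outcomes are made up for illustration and have nothing to do with the RSI data.

```python
def c_statistic(scores, outcomes):
    """Fraction of (event, non-event) pairs in which the event has the
    higher predicted risk; tied scores count as half."""
    events = [s for s, y in zip(scores, outcomes) if y == 1]
    non_events = [s for s, y in zip(scores, outcomes) if y == 0]
    if not events or not non_events:
        raise ValueError("need at least one event and one non-event")
    concordant = 0.0
    for e in events:
        for n in non_events:
            if e > n:
                concordant += 1.0
            elif e == n:
                concordant += 0.5
    return concordant / (len(events) * len(non_events))


# Hypothetical predicted risks and observed outcomes (1 = died in hospital).
risk = [0.05, 0.10, 0.20, 0.40, 0.70, 0.90]
died = [0, 0, 1, 0, 1, 1]

print(f"c-statistic: {c_statistic(risk, died):.3f}")
```

Here eight of the nine possible pairs are ranked correctly, giving 0.889. A value of 0.5 corresponds to chance and 1.0 to perfect ranking.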

Next, Samir M. Kendale, MD, of NYU Langone Health, in New York City, presented his work on predicting post-induction hypotension using machine-learning techniques.19 He spoke about some of the challenges involved in building machine-learning models. First is the concept of data leakage—using data for training the model that wouldn’t be available in the real world or in a validation data set. Data leakage, particularly when trying to predict rare events, can lead to “overfitting,” wherein the model performs better in training than it would in the real world. His research demonstrated that even though post-induction hypotension seems to an experienced clinician like an easy event to predict, it is actually quite difficult. Machine-learning techniques performed the same as or only marginally better than classic logistic regression.19 This highlights the challenge of using this approach intraoperatively.
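Data leakage is easier to see in a toy example. In the Python sketch below, every value is invented and the field names are hypothetical: `pressor_given` stands for something charted only after hypotension has occurred, so it leaks the outcome into training. A rule built on it looks perfect on the training rows and falls to chance when the field is still empty at prediction time.

```python
# Each row: (baseline_map, pressor_given, hypotension). All values are made up.
# pressor_given is recorded only AFTER hypotension occurs, so it leaks the outcome.
train = [
    (95, 0, 0), (88, 0, 0), (72, 1, 1), (70, 1, 1),
    (90, 1, 1), (68, 0, 0), (85, 0, 0), (66, 1, 1),
]

# New cases at prediction time: the pressor field has not been charted yet.
deployment = [
    (92, 0, 0), (71, 0, 1), (69, 0, 1),
    (87, 0, 0), (74, 0, 0), (93, 0, 1),
]


def leaky_rule(row):
    """'Predicts' hypotension from the leaked, after-the-fact field."""
    return row[1]


def honest_rule(row):
    """Uses only information available before induction."""
    return 1 if row[0] < 75 else 0


def accuracy(rule, rows):
    return sum(rule(r) == r[2] for r in rows) / len(rows)


for name, rule in [("leaky", leaky_rule), ("honest", honest_rule)]:
    print(
        f"{name}: training {accuracy(rule, train):.2f}, "
        f"deployment {accuracy(rule, deployment):.2f}"
    )
```

The leaky rule scores 1.00 in training and 0.50 in deployment, while the honest rule scores 0.75 and 0.67. The honest rule is less impressive on paper but is the only one whose performance carries over.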

Ira S. Hofer, MD, of UCLA Health System, presented a framework for using artificial intelligence and machine learning in the perioperative period. He discussed the importance of patient phenotyping and presented his work on automated calculation of the Revised Cardiac Risk Index.20 Automated phenotyping and risk stratification are critical for deploying smart algorithms. Dr. Hofer, too, emphasized that big data is not enough; the data have to become smart data. He ended by demonstrating the smart screening tool that UCLA is using to triage patients to a virtual preoperative visit, an inpatient visit, or no preoperative consult at all.21 This tool synthesizes data from the EHR and presents them in an easy-to-use dashboard.
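Once the phenotyping is done, the Revised Cardiac Risk Index itself is simple arithmetic: one point for each of six risk factors. The Python sketch below shows only that final scoring step, with a hypothetical `Patient` record whose fields are assumed to be already populated. The hard part of the work described above, deriving those fields reliably from EHR data, is not represented here.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    """Hypothetical, already-phenotyped record; field names are illustrative."""
    high_risk_surgery: bool
    ischemic_heart_disease: bool
    congestive_heart_failure: bool
    cerebrovascular_disease: bool
    insulin_treated_diabetes: bool
    creatinine_mg_dl: float


def rcri(p: Patient) -> int:
    """Revised Cardiac Risk Index: one point per risk factor present."""
    return sum([
        p.high_risk_surgery,
        p.ischemic_heart_disease,
        p.congestive_heart_failure,
        p.cerebrovascular_disease,
        p.insulin_treated_diabetes,
        p.creatinine_mg_dl > 2.0,  # preoperative creatinine above 2.0 mg/dL
    ])


example = Patient(
    high_risk_surgery=True,
    ischemic_heart_disease=False,
    congestive_heart_failure=True,
    cerebrovascular_disease=False,
    insulin_treated_diabetes=False,
    creatinine_mg_dl=2.4,
)
print(rcri(example))
```

The example patient scores 3 points (high-risk surgery, heart failure, and elevated creatinine).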

Quantum Computing: The Future of the Future?

The final academic session at this year’s meeting was the engineering challenge, an annual tradition. Aspiring students and residents compete to solve a difficult technical problem. This year’s challenge was to use quantum computing techniques to optimize staffing assignments. This is a classic example of a constrained optimization problem, an area in which there has been considerable interest in using quantum methods.22 The goal is to minimize the number of handoffs, place providers in their area of expertise, and ensure a fair and equitable relief order. Participants presented a variety of solutions using several different cloud quantum-computing platforms. For the simple optimization problem presented—four anesthesiologists with a given relief order, having to cover three rooms—the typical run time for the quantum optimizer was 15 to 20 milliseconds. In the end, all participants were declared winners.
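To make the shape of the problem concrete, here is a classical brute-force version in Python. It is not a quantum algorithm and not any participant's solution. The provider names, room specialties, expertise sets, and penalty weights are all hypothetical, and handoffs are not modeled. With four providers and three rooms there are only 24 possible assignments, so exhaustive search is enough.

```python
from itertools import permutations

providers = ["A", "B", "C", "D"]      # hypothetical anesthesiologists
relief_order = ["C", "A", "D", "B"]   # C is due to be relieved first
rooms = {"OR1": "cardiac", "OR2": "pediatric", "OR3": "general"}
expertise = {
    "A": {"cardiac", "general"},
    "B": {"pediatric", "general"},
    "C": {"general"},
    "D": {"pediatric"},
}


def cost(assignment):
    """Penalty for one assignment (one provider per room, in room order)."""
    # Ten points for each provider placed outside their area of expertise.
    mismatch = sum(
        rooms[room] not in expertise[person]
        for room, person in zip(rooms, assignment)
    )
    # The one provider left without a room should be the next due for relief.
    unassigned = next(p for p in providers if p not in assignment)
    relief_penalty = relief_order.index(unassigned)
    return 10 * mismatch + relief_penalty


# Enumerate every way to place three of the four providers in the rooms.
best = min(permutations(providers, 3), key=cost)

print(dict(zip(rooms, best)), "cost:", cost(best))
```

The search returns A in OR1, D in OR2, and B in OR3 at a cost of 0, leaving C, who is first in the relief order, free. The interest in quantum and other heuristic methods comes from larger instances, where the number of assignments grows too quickly to enumerate.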

Conclusion

Now is an exciting time to be a researcher and clinician in perioperative medicine and anesthesiology. Machine learning and other technologies are rapidly penetrating into the health care space, and as always, anesthesiologists are well positioned to become leaders and innovators. We welcome you to join us next January in Austin, Texas, for the 2020 annual meeting.

References

1. Ransford B, Kramer DB, Foo Kune D, et al. Cybersecurity and medical devices: a practical guide for cardiac electrophysiologists. Pacing Clin Electrophysiol. 2017;40(8):913-917.

2. Halperin D, Heydt-Benjamin TS, Ransford B, et al. Pacemakers and implantable cardiac defibrillators: software radio attacks and zero-power defenses. 2008 IEEE Symposium on Security and Privacy. doi:10.1109/SP.2008.31

3. Katz D, Zerillo J, Kim S, et al. Serious gaming for orthotopic liver transplant anesthesiology: a randomized control trial. Liver Transplant. 2017;23(4):430-439.

4. Michard F, Sessler DI. Ward monitoring 3.0. Br J Anaesth. 2018;121(5):999-1001.

5. FDA. Pediatric medical devices. www.fda.gov/medical-devices/products-and-medical-procedures/pediatric-medical-devices. Accessed July 1, 2019.

6. Szmuk P, Steiner JW, Olomu PN, et al. Oxygen Reserve Index: a novel noninvasive measure of oxygen reserve—a pilot study. Anesthesiology. 2016;124(4):779-784.

7. Bonafide CP, Jamison DT, Foglia EE. The emerging market of smartphone-integrated infant physiologic monitors. JAMA. 2017;317(4):353-354.

8. Bonafide CP, Localio AR, Ferro DF, et al. Accuracy of pulse oximetry-based home baby monitors. JAMA. 2018;320(7):717-719.

9. McDowell J, Wu A, Ehrenfeld JM, et al. Effect of the implementation of a new electronic health record system on surgical case turnover time. J Med Syst. 2017;41(3):42.

10. Guffey P. Improving the quality of your practice: CRASH 2016. Colorado Review of Anesthesia for SurgiCenters and Hospitals. www.ucdenver.edu/academics/colleges/medicalschool/departments/Anesthesiology/crash/crasharchives/Documents/February 29, 2016/06-Guffey CRASH 2016.pdf. Accessed July 1, 2019.

11. Drees J. The value of physician builders + compensation models: Q&A with Peninsula Regional Medical Center CMIO Dr. Mark Weisman. Becker’s Hosp Rev. January 31, 2019. www.beckershospitalreview.com/healthcare-information-technology/the-value-of-physician-builders-compensation-models-q-a-with-peninsula-regional-medical-center-cmio-dr-mark-weisman.html. Accessed July 1, 2019.

12. Wax DB, McCormick PJ, Joseph TT, et al. An automated critical event screening and notification system to facilitate preanesthesia record review. Anesth Analg. 2018;126(2):606-610.

13. McCormick PJ, Levin MA, Lin H-M, et al. Effectiveness of an electronic alert for hypotension and low bispectral index on 90-day postoperative mortality: a prospective, randomized trial. Anesthesiology. 2016;125(6):1113-1120.

14. Kheterpal S, Shanks A, Tremper KK. Impact of a novel multiparameter decision support system on intraoperative processes of care and postoperative outcomes. Anesthesiology. 2018;128(2):272-282.

15. Muller JZ. The Tyranny of Metrics. Princeton, NJ: Princeton University Press; 2018.

16. Lee CK, Hofer I, Gabel E, et al. Development and validation of a deep neural network model for prediction of postoperative in-hospital mortality. Anesthesiology. 2018;129(4):649-662.

17. Hatib F, Jian Z, Buddi S, et al. Machine-learning algorithm to predict hypotension based on high-fidelity arterial pressure waveform analysis. Anesthesiology. 2018;129(4):663-674.

18. Sessler DI, Sigl JC, Manberg PJ, et al. Broadly applicable risk stratification system for predicting duration of hospitalization and mortality. Anesthesiology. 2010;113(5):1026-1037.

19. Kendale S, Kulkarni P, Rosenberg AD, et al. Supervised machine-learning predictive analytics for prediction of postinduction hypotension. Anesthesiology. 2018;129(4):675-688.

20. Hofer IS, Cheng D, Grogan T, et al. Automated assessment of existing patient’s revised cardiac risk index using algorithmic software. Anesth Analg. 2019;128(5):909-916.

21. Stewart A. UC Los Angeles physicians are using telemedicine to transform the perioperative experience—Here’s how. Becker’s ASC Review. October 30, 2018. www.beckersasc.com/anesthesia/uc-los-angeles-physicians-are-using-telemedicine-to-transform-the-perioperative-experience-here-s-how.html. Accessed July 1, 2019.

22. Clavin W. Developing quantum algorithms for optimization problems. July 26, 2017. https://phys.org/news/2017-07-quantum-algorithms-optimization-problems.html. Accessed July 1, 2019.
